
DRY vs WET: The Database Normalization Trade-off

Why normalization is not always the answer and when denormalization makes sense.

The Textbook Version of Reality

When I was at university, I was taught normalization as a clean, almost mathematical discipline. There was a clear path to correctness: First Normal Form, Second Normal Form, Third Normal Form. Each step removed redundancy, clarified relationships, and brought the schema closer to some idealized notion of truth.

Redundancy was treated as a design failure. Duplication was a smell. If the same piece of information appeared twice, something had gone wrong.

It was, in hindsight, a beautifully consistent model.

It was also, in many cases, completely impractical—an excellent foundation for passing exams and a somewhat less reliable one for surviving production.

Then Production Happened

The very first practical lesson I got about databases was not how to normalize them.

It was when—and why—to stop.

Before this gets misread as heresy, let me be clear: this was not a “normalization is stupid” moment. Quite the opposite. I was lucky enough to have someone more experienced walk me through why normalization matters, what problems it solves, and what goes wrong when you ignore it blindly.

And then, in the same breath, explain why we sometimes abandon it on purpose.

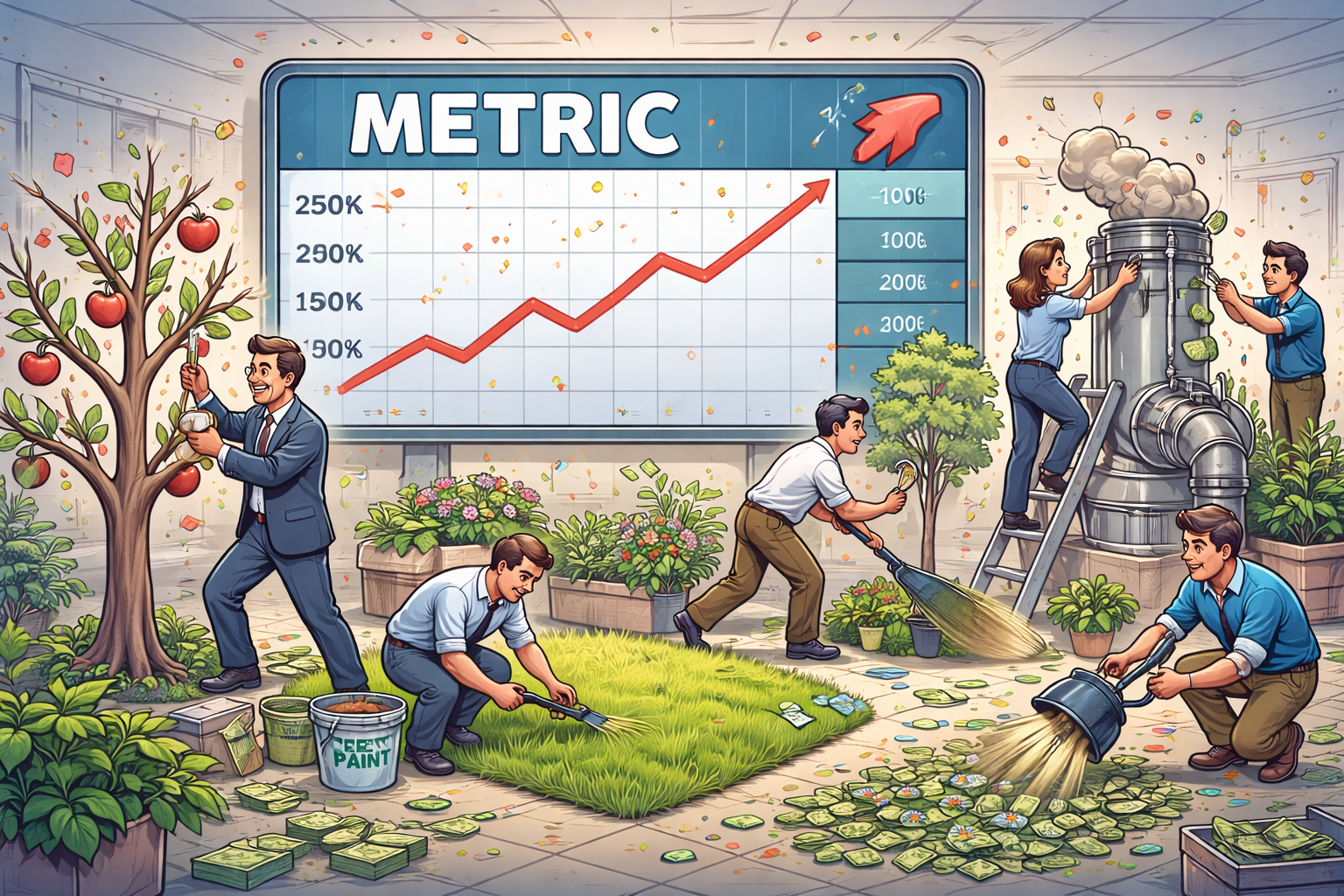

There are plenty of scenarios where denormalization makes sense—performance being the most obvious one. When queries become too expensive, joins too deep, and latency too visible to ignore, duplicating data can be the most pragmatic choice available.

But that does not mean “just add a column because we don’t know what we’re doing.” That is not engineering. That is guesswork with better tooling—and a Jira ticket.

Denormalization without understanding is just as dangerous as blind adherence to normalization. The difference is not in the schema—it’s in the reasoning. If a database architect can justify the trade-off, articulate the risks, and explain the consequences, that’s a decision. If not, it’s a liability waiting for a convenient moment to surface.

In practice, those pristine schemas don’t so much fail as they get negotiated with. Under real load and real constraints, teams start bending the rules—selectively, intentionally, and usually for performance. A join too far becomes a copied field; a slow report becomes a read-optimized table. Not by accident, but by trade-off.

Consistency vs Reality

Normalization optimizes for consistency. Denormalization optimizes for reality—and reality tends to win arguments.

In a perfectly normalized world, every fact lives in exactly one place, and every query reconstructs the desired view through joins. This works beautifully—until it doesn’t. Joins are not free. They carry computational cost, cognitive cost, and often, organizational cost. When data is spread across multiple services or owned by different teams, a “simple join” can turn into a distributed systems problem wearing a SQL-shaped hat.

At that point, duplication stops looking like a mistake and starts looking like a trade-off.
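
To make the trade-off concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `customers` and `orders` tables, their columns, and the sample rows are all hypothetical and invented for illustration. The same report is produced twice: once by joining, and once by reading a copied `customer_name` column straight from `orders`.

```python
import sqlite3


def build_db() -> sqlite3.Connection:
    """Create a tiny in-memory database (hypothetical schema and data)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (
            id   INTEGER PRIMARY KEY,
            name TEXT NOT NULL
        );
        CREATE TABLE orders (
            id            INTEGER PRIMARY KEY,
            customer_id   INTEGER NOT NULL REFERENCES customers(id),
            total_cents   INTEGER NOT NULL,
            customer_name TEXT NOT NULL  -- deliberate copy of customers.name
        );
        INSERT INTO customers (id, name) VALUES (1, 'Ada'), (2, 'Grace');
        INSERT INTO orders (id, customer_id, total_cents, customer_name) VALUES
            (10, 1, 2500, 'Ada'),
            (11, 2, 4000, 'Grace');
        """
    )
    return conn


def report_normalized(conn: sqlite3.Connection) -> list:
    """Rebuild the view from the single source of truth with a join."""
    return conn.execute(
        """
        SELECT o.id, c.name, o.total_cents
        FROM orders AS o
        JOIN customers AS c ON c.id = o.customer_id
        ORDER BY o.id
        """
    ).fetchall()


def report_denormalized(conn: sqlite3.Connection) -> list:
    """Read the copied field directly; no join required."""
    return conn.execute(
        "SELECT id, customer_name, total_cents FROM orders ORDER BY id"
    ).fetchall()
```

With two tables in one database the join costs next to nothing, so this sketch shows the shape of the trade-off rather than its payoff. The copy starts to earn its keep when `customers` lives in another service, or when the join chain is several tables deep.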

DRY, Misunderstood

This is where the DRY principle quietly enters the room, slightly misunderstood and heavily over-applied. DRY—Don’t Repeat Yourself—was never meant to eliminate all duplication of code or data. It was about avoiding duplication of knowledge. The idea was simple: if you have to change the same concept in multiple places, you have already lost.

Somewhere along the way, that idea was flattened into a rule: duplication is bad. Always. Everywhere. Preferably enforced by linters and strong opinions.

And just like that, we started building systems where avoiding duplication became more important than managing complexity.

This is how you end up extracting a single shared method simply because two pieces of code happen to look similar at one moment in time—despite solving different problems, owned by different parts of the system, and evolving in completely different directions. It compiles. It even passes tests. It will also ruin your next three sprints. It feels like progress. It looks like cleanliness. In reality, it’s often the beginning of a slow, quiet tragedy, where unrelated concerns get entangled and every future change becomes a negotiation.
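
A small sketch of how this tends to play out, with hypothetical names and rates chosen only for illustration. A tax rule and a marketing rule both started life as `net_cents * 20 // 100`. Kept apart, each one changes on its own schedule. Merged, the shared helper has to grow a `kind` flag the first time one of them moves.

```python
# Kept separate: each function encodes exactly one piece of knowledge.
def vat_cents(net_cents: int) -> int:
    """Tax rule (hypothetical 20% rate); changes when legislation changes."""
    return net_cents * 20 // 100


def loyalty_discount_cents(net_cents: int) -> int:
    """Marketing rule; it also started at 20%, then a campaign made it 15%."""
    return net_cents * 15 // 100


# Merged because both bodies once looked identical. After the campaign
# change, the helper needs a flag, and every caller now depends on both rules.
def shared_percentage_cents(net_cents: int, kind: str) -> int:
    if kind == "vat":
        return net_cents * 20 // 100
    if kind == "loyalty":
        return net_cents * 15 // 100
    raise ValueError(f"unknown kind: {kind}")
```

The two versions compute the same numbers. What differs is who has to be in the room when either rule changes.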

The Real Enemy: Coupling

The problem is not duplication. The problem is coupling—the kind that politely says “this is fine” until it very much isn’t.

A fully normalized schema minimizes redundancy, but it maximizes dependency. Every piece of data is connected, often transitively, to several others. A change in one place can ripple through the entire system. Queries become fragile. Refactoring becomes risky. The blast radius of a seemingly small modification expands quietly until it surprises everyone involved.

Denormalization, on the other hand, introduces redundancy but reduces dependency. By copying data or logic deliberately, we isolate parts of the system. Queries become simpler. Services become more independent. Changes can be made locally, without negotiating with half the organization or re-evaluating every join in existence.

Of course, this comes at a cost. Now you have to deal with synchronization, eventual consistency, and the uncomfortable question of which copy of the data is “the real one.” You trade one class of problems for another.
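
Here is what that cost can look like in a minimal `sqlite3` sketch. The schema, the names, and the `sync_copies` flag are hypothetical. Renaming a customer without touching the copies leaves `orders.customer_name` stale. Keeping them in step means every writer has to remember the second `UPDATE`, ideally inside the same transaction.

```python
import sqlite3


def build_db_with_copy() -> sqlite3.Connection:
    """In-memory database where orders carries a copy of customers.name."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE orders (
            id            INTEGER PRIMARY KEY,
            customer_id   INTEGER NOT NULL REFERENCES customers(id),
            customer_name TEXT NOT NULL  -- denormalized copy
        );
        INSERT INTO customers (id, name) VALUES (1, 'Ada');
        INSERT INTO orders (id, customer_id, customer_name) VALUES (10, 1, 'Ada');
        """
    )
    return conn


def rename_customer(
    conn: sqlite3.Connection, customer_id: int, new_name: str, sync_copies: bool
) -> None:
    """Update the source row and, optionally, every copy of it."""
    with conn:  # one transaction: both updates commit or neither does
        conn.execute(
            "UPDATE customers SET name = ? WHERE id = ?", (new_name, customer_id)
        )
        if sync_copies:
            conn.execute(
                "UPDATE orders SET customer_name = ? WHERE customer_id = ?",
                (new_name, customer_id),
            )


def stale_orders(conn: sqlite3.Connection) -> list:
    """Return ids of orders whose copied name no longer matches the source."""
    return conn.execute(
        """
        SELECT o.id
        FROM orders AS o
        JOIN customers AS c ON c.id = o.customer_id
        WHERE o.customer_name <> c.name
        ORDER BY o.id
        """
    ).fetchall()
```

In a single database the two updates can share a transaction. Once the copy lives in another service, that option disappears, and the question of which copy is "the real one" becomes something you have to design for.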

But that’s the point. This was never about right versus wrong. It was always about trade-offs.

Abstractions Are Bets

Every abstraction we introduce, every normalization step we enforce, is a bet on the future. We assume that the relationships we model today will remain stable. We assume that access patterns will not change dramatically. We assume that the cost of joins will remain acceptable. These assumptions are often reasonable—right up until they aren’t.

When they break, they do so in production—ideally on a Friday afternoon, for educational purposes.

At that moment, theoretical purity tends to lose the argument.

What follows is rarely elegant. A field is duplicated here, an index added there, a read-optimized table appears to serve a specific use case. Over time, the system evolves into something that would make a database theory professor slightly uncomfortable and a production engineer quietly relieved.

Where WET Wins

In interviews, I like to ask a deceptively simple question: what is the opposite of DRY?

Very few candidates get it right. The expected answer is WET: “Write Everything Twice” or, depending on your sense of humor, “We Enjoy Typing.” That alone reveals how deeply someone has actually thought about the principle, or at least how comfortable they are disagreeing with dogma.

But that’s just the warm-up.

The real question is this: in which area of software engineering is WET not just acceptable, but often the better default?

This is where most people get stuck. There’s a moment of visible discomfort, as if we’ve just questioned something sacred. DRY is supposed to be universally good. Suggesting otherwise feels almost… inappropriate.

The answer is unit tests.

If that sounds wrong, it’s worth pausing for a moment. The purpose of tests is to verify logic. Meanwhile, applying DRY aggressively in tests introduces loops, helper abstractions, conditional branches, parameters, and all the usual machinery we use to reduce duplication in production code.

In other words: we add logic.

And once tests contain logic, a very uncomfortable question appears: who tests the tests? (Hint: you probably won’t like the answer.)

I have seen this spiral into tests for test helpers… for test helpers… for test helpers. Four layers deep. The result was exactly what you would expect: reduced readability, slower iteration, and a team that spent more time understanding the testing framework than the system it was supposed to validate.

WET in tests is not laziness. It is clarity. Repetition in this context is not a failure of discipline—it is a deliberate choice to keep each test independent, explicit, and easy to reason about.

Because when the goal is verification, the last thing you want is another layer of abstraction standing between you and the truth.
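
A small sketch of the contrast, with a hypothetical `apply_discount` function standing in for the code under test. The DRY version packs every case into one loop with a branch for the error path. The WET version repeats itself, and each test can be read, run, and fail on its own.

```python
def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical function under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents - price_cents * percent // 100


# DRY style: one test, a case table, a loop, and a conditional.
# The test now contains logic of its own that nobody verifies.
def test_apply_discount_dry() -> None:
    cases = [(1000, 0, 1000), (1000, 25, 750), (1000, 100, 0), (1000, 101, None)]
    for price, percent, expected in cases:
        if expected is None:
            try:
                apply_discount(price, percent)
            except ValueError:
                continue
            raise AssertionError("expected ValueError")
        assert apply_discount(price, percent) == expected


# WET style: repetitive, but every test states its whole story in one place.
def test_zero_percent_keeps_price() -> None:
    assert apply_discount(1000, 0) == 1000


def test_quarter_off() -> None:
    assert apply_discount(1000, 25) == 750


def test_full_discount_is_free() -> None:
    assert apply_discount(1000, 100) == 0


def test_percent_above_100_is_rejected() -> None:
    try:
        apply_discount(1000, 101)
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

When a WET test fails, its name tells you which behavior broke. When the DRY test fails, you first have to work out which row of the table you were on.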

Tests are not the only place where this trade-off shows up.

I’ve seen similar arguments around Infrastructure as Code. The default is less obvious here, but the same forces apply: readability, debuggability, and the sheer difficulty of testing complex environments. At some point, trying to stay perfectly DRY leads to monstrous maps, deeply nested variables, and “default values” gymnastics—all just to produce a handful of resources that differ by a single parameter.

And then someone does the unthinkable: Ctrl+C, Ctrl+V. Civilization does not collapse.

A few duplicated virtual machines. A couple of autoscaling groups defined explicitly. Slightly repetitive, yes—but suddenly readable, predictable, and much easier to reason about.

Because just like with tests, the moment your abstraction becomes harder to understand than the thing it generates, you’ve already lost.

Bigger Than DRY or Databases

This observation goes far beyond databases or the DRY principle. It applies to almost everything we learn in engineering.

Best practices are called “best” for a reason. They are not universal, divine rules. They work well in many situations—but not in all of them. There are exceptions. There are trade-offs. There are contexts where the “right” answer changes.

But the existence of exceptions does not excuse ignorance.

Knowing when to break a rule requires understanding why it exists in the first place. Denormalization is a perfect example: it can be a perfectly valid decision—but only if it is a conscious trade-off. You need to know what you are giving up, what risks you are introducing, and why the benefits outweigh them.

Otherwise, it’s not a decision. It’s drift—with better documentation.

This is the uncomfortable truth behind most “best practices”: they are tools, not laws. They guide you—until the moment you have enough context to justify deviating from them.

And that moment only comes if you actually learned them properly.

The Real Lesson

Universities teach us to build correct models. Production teaches us to build survivable systems. The difference between the two is where most of engineering actually happens.

DRY is still a valuable principle. Normalization is still a powerful tool. But neither of them is a universal law. They are strategies, and like all strategies, they operate within constraints.

If your system cannot tolerate a small amount of duplication, it is probably more fragile than you think—and far more confident than it should be.

And if breaking a rule improves the system, then perhaps it was never a rule to begin with.

It was a guideline. One that works beautifully—until reality shows up.