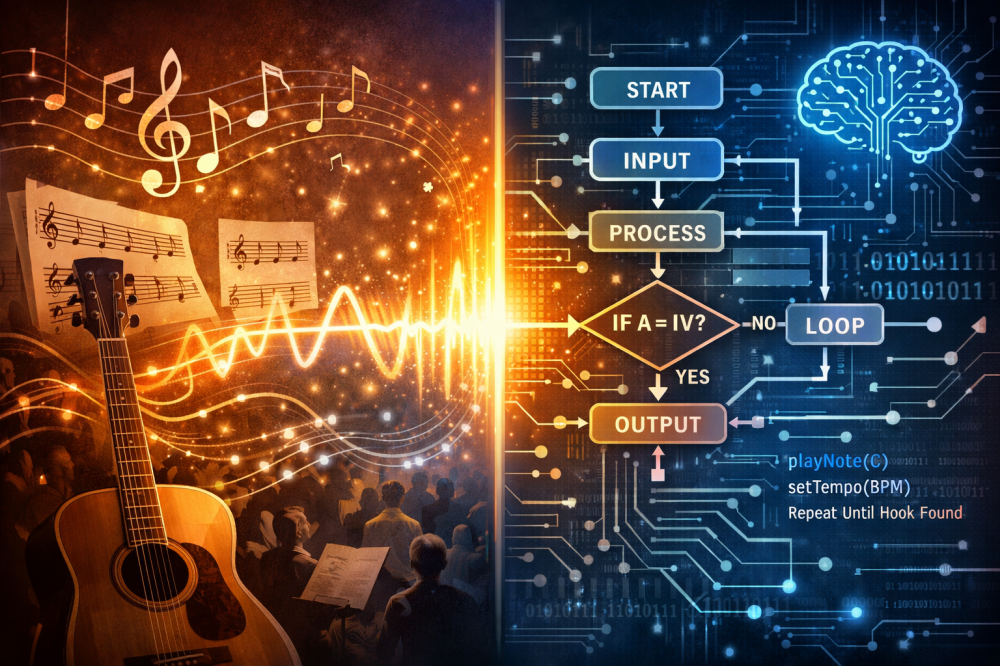

· Systems · 10 min read

Art is Just a Very Slow Renderer: Why Art History is a Low-FPS GPU Benchmark

If we strip away the romantic fluff, art history is nothing more than the evolution of computer graphics—just stretched over thousands of years because the hardware was garbage.

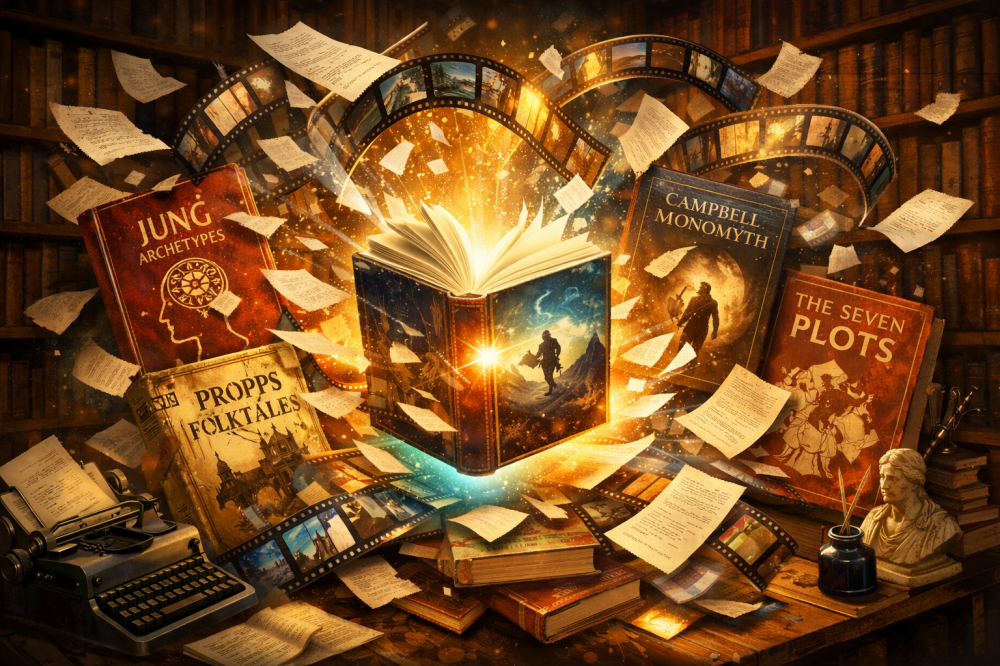

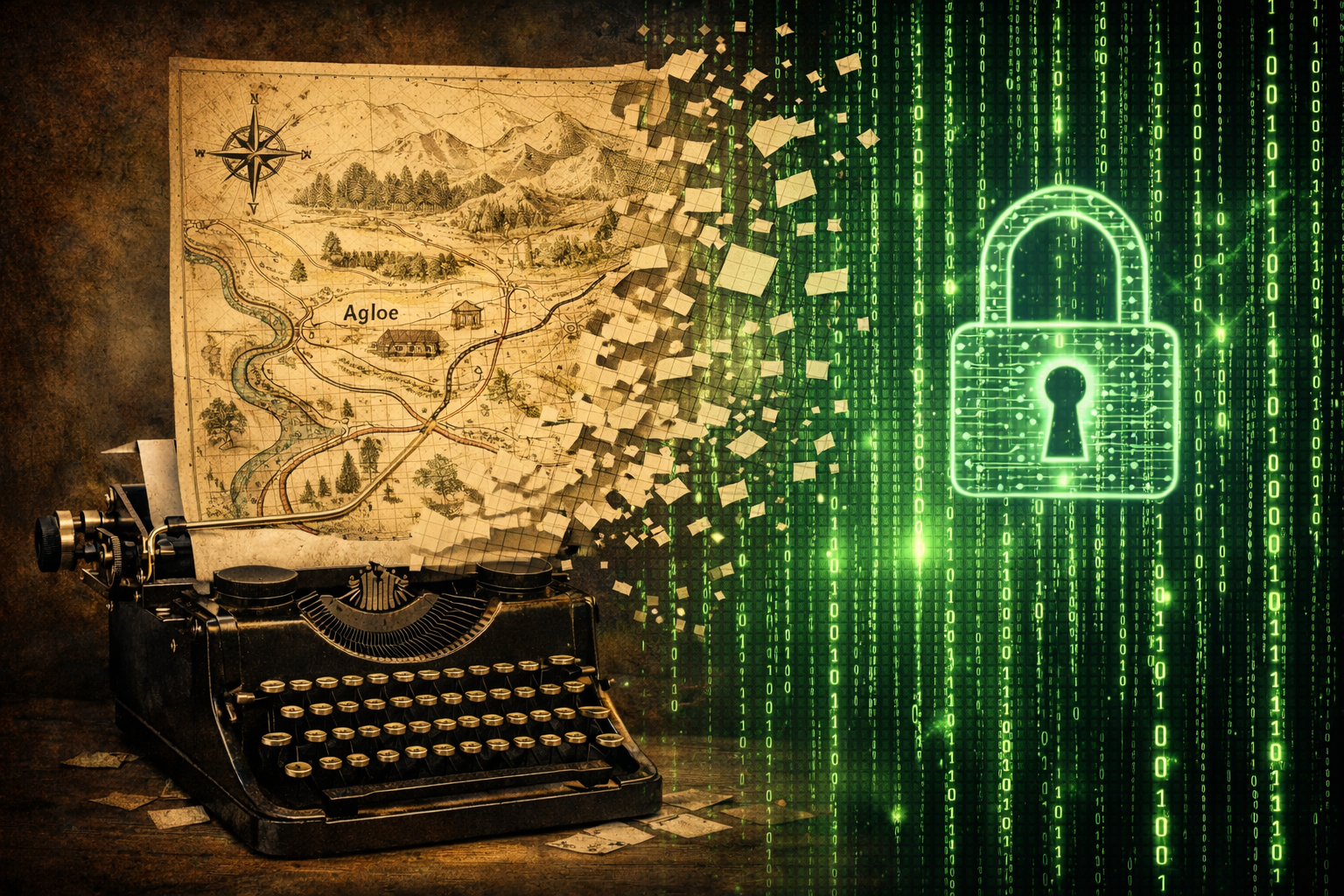

Up until now in this series, we’ve been playing digital archaeologist. We first established that modern data protection is essentially 90% the same tricks used by medieval monks and 17th-century cartographers, just with better marketing and a larger carbon footprint. Then, we dove into the guts of narrative, only to find that a writer’s “inspiration” is often just a savvy choice of the right template from a legacy library of archetypes or some high-level narrative flowchart.

Today, it’s time for the hottest potato of them all: visual arts.

Before we start, a small disclaimer: I know as much about art as a goldfish knows about the internal combustion engine. My understanding of aesthetics ends where the monitor’s user manual begins. But let’s look at this through an engineer’s lens. If we strip away the romantic fluff about the “artist’s soul,” it turns out that art history is nothing more than the evolution of computer graphics—just stretched over thousands of years because the hardware was, frankly, garbage.

We are entering the era of Generative AI and panicking that “the machine is replacing us.” Spoiler: we have always been rendering machines. Our biological GPUs were just painfully slow and suffered from massive latency.

1. Antiquity is Pixel Art (Constraint as Style)

Comparison of an Egyptian fresco with the original Mario (both ChatGPT renders)

Let’s start with the basics. Why do figures in Egyptian frescoes or early medieval icons look so… flat? Did people in 2000 BCE see the world in 2D? Of course not. It’s just that their “render engine” had tragically low bandwidth and zero floating-point support.

This is the exact same problem Shigeru Miyamoto faced when he designed Mario for Donkey Kong in 1981. Why does Mario have a mustache and a big nose? Because at such a low pixel resolution, it was impossible to draw a mouth or facial expressions without turning him into a glitchy mess. The mustache was a “hard-coded hack” for readability.

In antiquity, the painter wasn’t fighting for photorealism because the “computational cost” of rendering shadow and depth across a temple wall was too high for the available tech. Art back then was a database of symbols. A god had to be as recognizable as an icon on your desktop. Egyptian perspective is effectively the Pixel Art of antiquity—unoptimized symbols designed for the low resolution of contemporary dyes and limited labor-hours.

2. The Renaissance: The First “3D Engine”

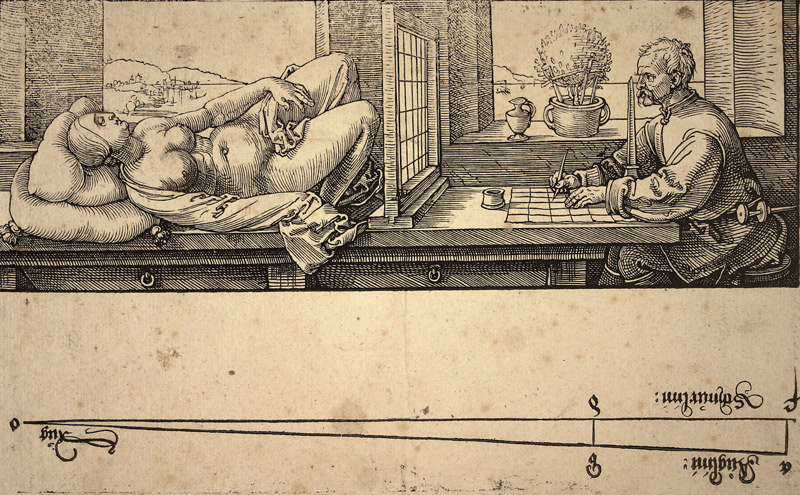

Albrecht Dürer’s “The Painter’s Perspective”

The real breakthrough came in the 15th century. Filippo Brunelleschi and his crew didn’t just “discover beauty”; they implemented the first standardized graphics library—linear perspective.

In engineering terms: the Renaissance was the moment we transitioned from 2D sprites to a 2.5D environment, and then full 3D. Convergent perspective is nothing more than a rigorous mathematical algorithm used to project points in 3D space onto a 2D plane.

Look at Albrecht Dürer’s engravings showing artists using specialized frames and strings to plot perspective. That wasn’t inspiration; it was a manual vertex shader. It was mechanical labor—performing the calculations that your graphics card now does billions of times per second. Brunelleschi was essentially a Lead Architect who wrote the first robust “render-to-texture” logic for reality.
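To make the "manual vertex shader" claim concrete, here is a minimal sketch of a one-point perspective projection. It assumes a pinhole model with the eye at the origin looking down the +z axis; the function name `project` and the `focal_length` parameter are illustrative choices of mine, not anything taken from Brunelleschi or Dürer.

```python
from typing import Tuple

Point3D = Tuple[float, float, float]
Point2D = Tuple[float, float]


def project(point: Point3D, focal_length: float = 1.0) -> Point2D:
    """Project a 3D point onto the picture plane z = focal_length.

    Assumption: the eye sits at the origin and looks down +z, playing
    the role of the fixed eye-point in a string-and-frame setup.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the eye (z > 0)")
    scale = focal_length / z  # farther points shrink toward the centre
    return (x * scale, y * scale)


# Two parallel floor lines receding from the viewer.
depths = (1.0, 2.0, 4.0, 8.0)
left_line = [(-1.0, -1.0, z) for z in depths]
right_line = [(1.0, -1.0, z) for z in depths]

for left, right in zip(left_line, right_line):
    # The projected pairs drift toward (0, 0): the vanishing point.
    print(project(left), project(right))
```

The whole trick is the single division by depth. Lines that are parallel in the scene end up converging on the canvas, which is what the strings in Dürer's frame were computing one point at a time.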

3. Impressionism: The Ray Tracing War

Monet’s series of the Rouen Cathedral

Fast forward to the 19th century. Once the “3D Engine” was perfected and became boring, artists started looking at physics. Impressionism was the moment we turned on the lighting effects and post-processing.

If you look at Monet’s Rouen Cathedral series, where he painted the same facade more than 30 times, he wasn’t “painting a church.” He was stress-testing the Global Illumination of the sun. He maxed out the edge blur (hence the soft outlines) and cranked up the HDR.

Impressionists realized that shape is secondary to light physics. They stopped rendering polygons and started rendering photons. In modern terms, they switched from a rasterized engine to a Ray Tracing model, focusing on how light bounces off surfaces in real-time. It was high-latency, high-cost, but it looked like nothing before it.
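A rough sketch of the "same geometry, different light" idea, using plain Lambertian diffuse shading. To be clear, this is direct lighting only, not ray tracing or real global illumination. The facade normal, the albedo value, and the sun elevations are all made-up assumptions for illustration.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def lambert(normal: Vec3, light_dir: Vec3, albedo: float = 0.8) -> float:
    """Diffuse brightness of a surface: albedo * max(0, cos(angle))."""
    n_len = math.sqrt(sum(c * c for c in normal))
    l_len = math.sqrt(sum(c * c for c in light_dir))
    cos_theta = sum(n * l for n, l in zip(normal, light_dir)) / (n_len * l_len)
    return albedo * max(0.0, cos_theta)  # surfaces facing away get no light


def sun_direction(elevation_deg: float) -> Vec3:
    """Unit vector toward a sun that rises in the y-z plane (assumed setup)."""
    e = math.radians(elevation_deg)
    return (0.0, math.sin(e), math.cos(e))


facade_normal = (0.0, 0.0, 1.0)  # a wall facing the painter along +z

# Hypothetical times of day; the geometry never changes, only the light.
for label, elevation in [("morning", 10), ("midday", 60), ("late", 85)]:
    brightness = lambert(facade_normal, sun_direction(elevation))
    print(f"{label}: {brightness:.3f}")
```

The wall is identical in every iteration of the loop. Only the light vector moves, and the output changes with it, which is roughly the experiment Monet kept re-running on canvas.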

4. Cubism: Multi-Viewport Rendering and RAG

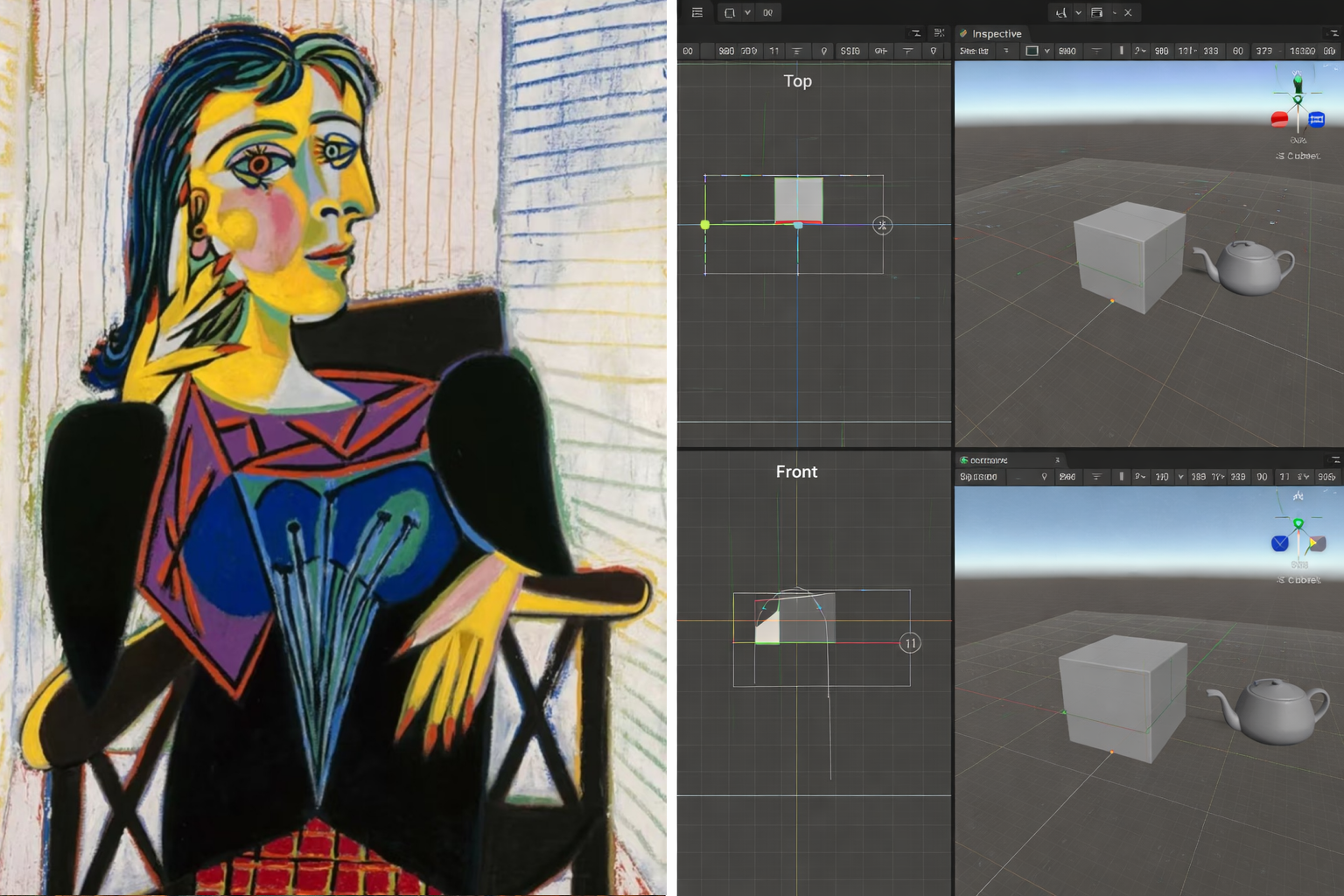

Portrait of Dora Maar (Picasso, 1937) vs Unity engine (ChatGPT render)

By the early 20th century, the “perfect render” had been achieved and commodified. So, artists like Picasso and Braque decided to break the camera and experiment with the data structure.

Cubism was essentially a multi-viewport rendering hack. Instead of looking at an object from one angle (a single camera call), the artist renders the front, the profile, and the top-down view all in the same frame. It’s exactly what you do when you’re debugging a 3D scene in Unity—viewing the wireframe from multiple perspectives to verify the geometry.

In AI terms, it’s an early prototype of RAG (Retrieval-Augmented Generation). The artist “retrieves” patches of visual data from different “locations” (viewpoints) and synthesizes them into a single, high-information composite. It’s no longer about a snapshot; it’s about a multi-source data aggregation that carries more semantic meaning than a single, “accurate” frame.
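Here is a toy version of the multi-viewport idea, assuming three orthographic "cameras" that each drop one axis. The view names, the `composite` helper, and the side-by-side layout are my own illustrative choices; a Cubist canvas overlaps and fragments its views far more aggressively than this does.

```python
from typing import Callable, Dict, List, Tuple

Point3D = Tuple[float, float, float]
Point2D = Tuple[float, float]

# Each "camera" is an orthographic view that simply discards one axis.
VIEWS: Dict[str, Callable[[Point3D], Point2D]] = {
    "front": lambda p: (p[0], p[1]),    # look along z, keep x and y
    "profile": lambda p: (p[2], p[1]),  # look along x, keep z and y
    "top": lambda p: (p[0], p[2]),      # look along y, keep x and z
}


def render_all_views(points: List[Point3D]) -> Dict[str, List[Point2D]]:
    """Render the same point set once per viewpoint."""
    return {name: [view(p) for p in points] for name, view in VIEWS.items()}


def composite(views: Dict[str, List[Point2D]], spacing: float = 3.0) -> List[Point2D]:
    """Merge all views into one frame by shifting each along x."""
    canvas: List[Point2D] = []
    for index, points in enumerate(views.values()):
        canvas.extend((x + index * spacing, y) for x, y in points)
    return canvas


# A hypothetical "face" reduced to two landmark points.
face = [(0.0, 0.0, 1.0), (0.3, 0.5, 0.8)]
print(composite(render_all_views(face)))
```

A single-camera renderer would keep only one entry of `VIEWS`. The composite keeps all three, trading a coherent snapshot for more information about the object.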

5. Photography: The Zero-Cost Renderer

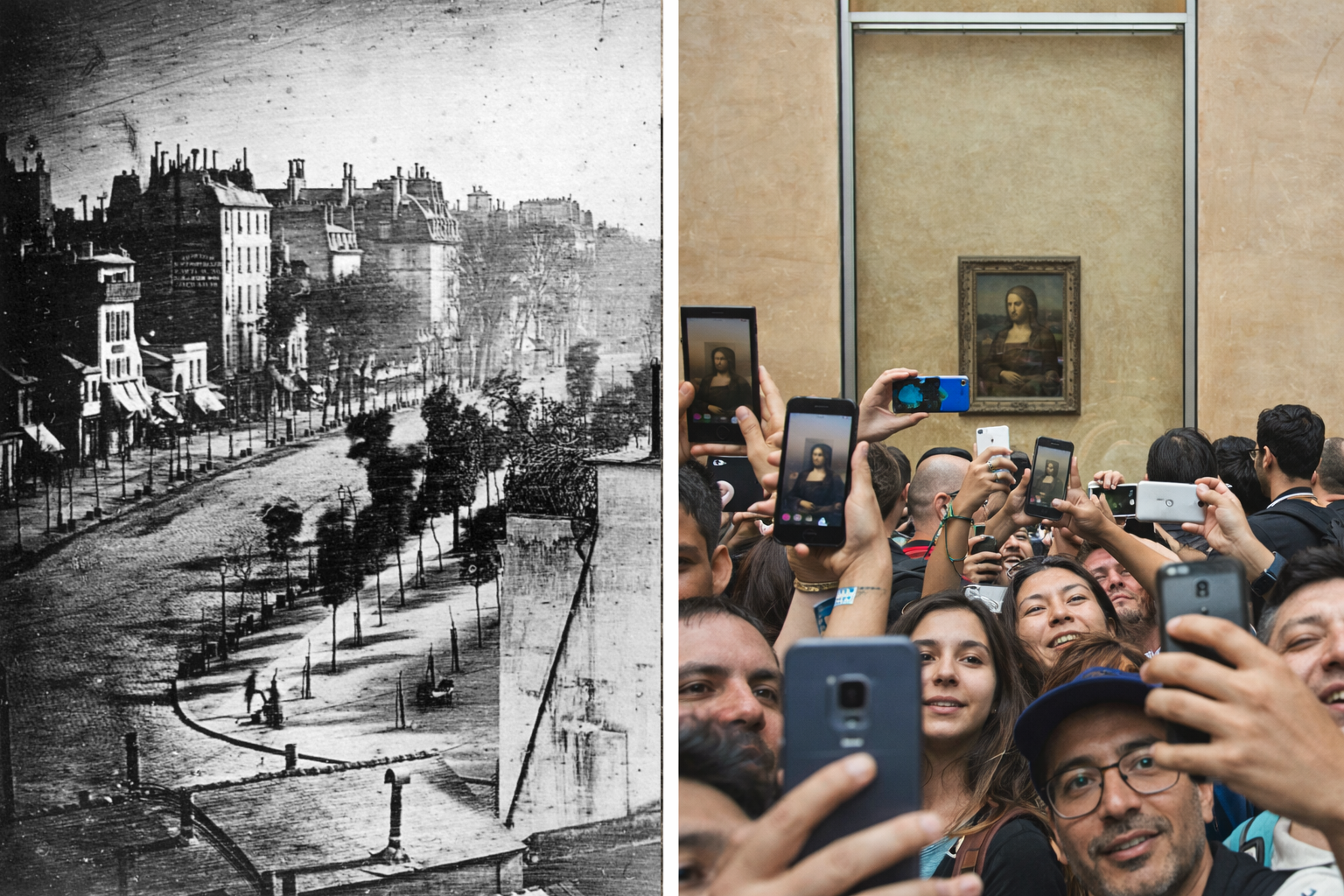

Fragment of Boulevard du Temple (Louis Daguerre, 1838) vs Tourists (ChatGPT render)

In 1839, the industry had a collective heart attack. The daguerreotype arrived. For the first time, you could “render” a scene with zero manual CPU cycles.

The initial discourse was brutal. Critics dismissed it as a purely chemical and mechanical process, void of human spirit. “The end of art” was a common headline long before we had Twitter threads about Midjourney. But today, no one questions photography’s status as a fine art. It survived the panic because it forced a redefinition of authorship. If the machine does the “rendering,” the artist’s value shifts to composition and curation.

Every tourist with a smartphone today has more “render power” in their pocket than a 19th-century master could dream of. Yet, carrying an iPhone doesn’t turn every traveler into Henri Cartier-Bresson or Robert Capa. The “click” has been commodified to zero; the human choice remains the only scarce resource.

6. Andy Warhol: The Industrialized Selection

Marilyn Monroe Complete Portfolio (Andy Warhol, 1967) vs Tourists (ChatGPT render)

If photography democratized the “click,” Andy Warhol industrialized the “intent.” Warhol was famously obsessed with removing the “human touch”—specifically the unique, expressive brushstroke—from art. He wanted to be a machine, and he wasn’t joking.

His studio, aptly named “The Factory,” was a human-powered inference engine. He employed a rotating cast of assistants and interns to execute the actual manual labor. In many cases, Warhol didn’t even touch the hardware. He acted as the ultimate System Administrator, managing the selection of datasets (Marilyn Monroe, Campbell’s soup cans) and the configuration of the production pipeline.

In essence, Warhol was the grandfather of Prompt Engineering. He didn’t paint; he issued commands. He understood that in a world of mass production, the “art” isn’t in the execution—it’s in the command line. I don’t exactly follow art periodicals, but I don’t recall a massive consensus claiming that Warhol wasn’t an artist just because he outsourced the manual ‘brushstroke’ factor to his interns.

Today, we might type:

Hey Midjourney, draw me a picture of a dragon fighting with Godzilla with Power Rangers playing Twister in the background, in the style of a 1960s screen print.

Godzilla vs Dragon (Gemini 3 render - yes, I cheated by not using Midjourney).

Warhol did the same thing, just with higher latency. He provided the concept, the source material, and the parameters, then waited for his “Factory” to return the output.

7. Bauhaus: The Design System Protocol

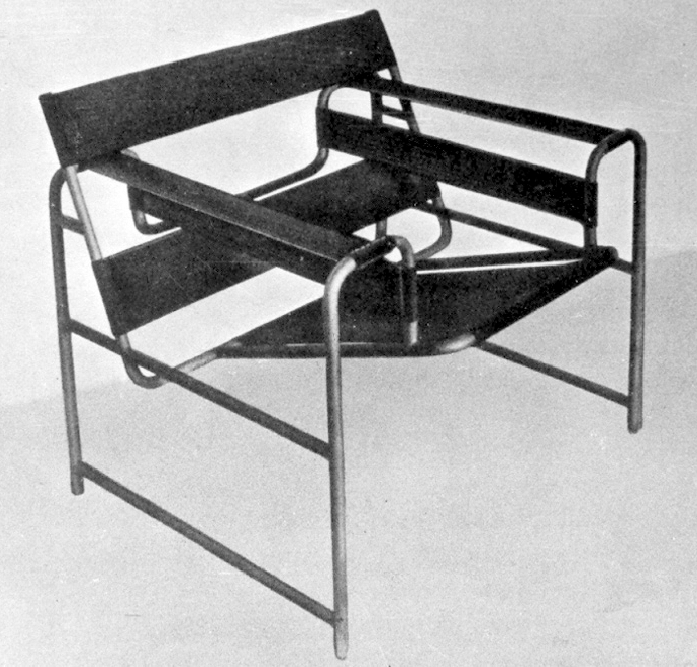

Wassily Chair (Marcel Breuer, 1925)

While one half of the art world was busy screaming about the existential threat of photography, and Warhol was wandering through supermarkets trying to decide between a can of soup or a press photo of Marilyn from the movie Niagara, a completely different process was unfolding. While the “front-end” was fighting over curation and clicks, the Bauhaus movement was busy reinventing the architecture.

If Warhol was the UI, Bauhaus was the Design System. Before them, furniture and architecture were “legacy code”—messy, ornamental, and unoptimized. Walter Gropius and his team didn’t care about “pictures”; they were building a universal design protocol.

Take the Wassily Chair. It’s not a “throne” meant to show status; it’s a physical implementation of a sit-down function optimized for mass production. They stripped away the ornamentation to find the most efficient way to communicate utility. Bauhaus was the first to treat visual language like a modular code library: a set of standardized, reusable components for the physical world that ensured consistency across the entire human environment.

8. Malevich: The Supreme Black Box

While Bauhaus was optimizing the “how,” Kazimierz Malevich was breaking the “what.” His Black Square is the ultimate Black Box.

To a casual observer, it’s a 1-bit output. But Suprematism was about the “hidden logic”: the rejection of function for the sake of pure feeling.

A quick detour from a math psycho who’s trying (and sometimes failing) to function in human society: personally, I think this is a lot like complex numbers. Back in the 18th and 19th centuries, they were considered a totally useless tool for torturing students. Then electronics happened, and it turned out they are the most practical way to model AC circuits. You basically can’t design modern circuits without stepping into the complex plane during calculations.

Sure, the final result should be in real numbers (the voltage at the output), but the process of getting there is, well, complex (pun intended). It’s exactly like Malevich’s journey to his black square (or how a model like Nano Banana arrives at a picture of a rubber ducky): X-rays show layers of painted-over compositions underneath Malevich’s square. It’s a perfect metaphor for the hidden layers of a neural network: the final result is minimalist, but the “computational” weight of the ideology and the layers of training data underneath is massive.
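For the circuit half of that analogy, here is a small sketch of what "stepping into the complex plane" looks like in practice. It assumes a textbook RC low-pass filter driven by a sine wave; the component values and the function name `rc_lowpass_output` are hypothetical and only there to have numbers to push through.

```python
import cmath
import math
from typing import Tuple


def rc_lowpass_output(
    v_in_amplitude: float, freq_hz: float, r_ohms: float, c_farads: float
) -> Tuple[float, float]:
    """Return (amplitude in volts, phase in degrees) at the filter output."""
    omega = 2 * math.pi * freq_hz
    z_r = complex(r_ohms, 0.0)         # resistor: purely real impedance
    z_c = 1 / (1j * omega * c_farads)  # capacitor: purely imaginary impedance
    # An ordinary voltage divider, except every quantity is complex.
    v_out = v_in_amplitude * z_c / (z_r + z_c)
    # Only at the very end do we come back to real, measurable numbers.
    return abs(v_out), math.degrees(cmath.phase(v_out))


# Assumed values: 1 kOhm, 1 uF, driven at the filter's cutoff frequency.
r, c = 1_000.0, 1e-6
cutoff_hz = 1 / (2 * math.pi * r * c)
amplitude, phase = rc_lowpass_output(1.0, cutoff_hz, r, c)
print(f"{amplitude:.4f} V at {phase:.1f} degrees")
```

The input and the output are both plain real voltages. Everything in between happens on the complex plane and then gets thrown away, much like the painted-over layers under the square.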

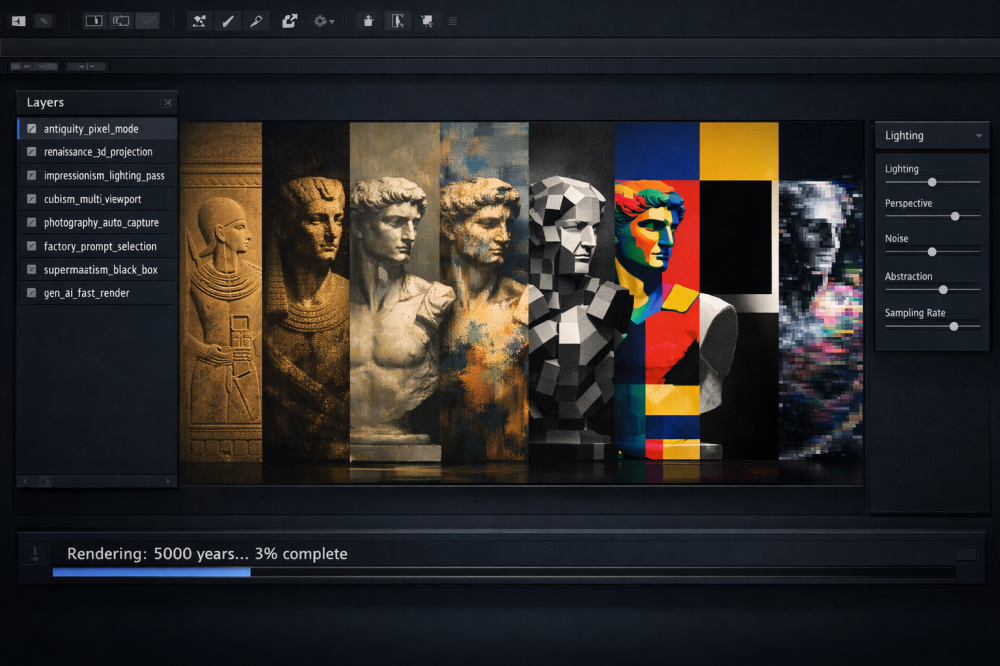

Wrapping up: How History Rhymes

Summary of art evolution (Gemini 3 render)

Once you begin to see these historical parallels, they become impossible to “unsee.”

- Antiquity: Hard-coded symbols for low-bandwidth mediums (Pixel Art).

- The Renaissance: The first standardized 3D-to-2D vertex shader.

- Impressionism: Stress-testing Global Illumination and light physics.

- Cubism: Multi-viewport RAG data aggregation.

- Photography: The disruption of the first zero-cost rendering pipeline.

- Warhol’s Factory: Industrialized curation (Prompting phase).

- Bauhaus & Malevich: The systemic optimization of design and the supremacy of the black box.

Looking at it this way, it’s hard not to feel that the entire history of computer graphics evolution looks as if someone hit “fast-forward” on art history. Even the current discourse regarding generative AI has its place: we are roughly at the “Warhol with a camera” stage—everyone has the tools, artists are screaming, and no one knows what art will be or look like in five or ten years.

We keep telling ourselves that “intent” is the final human bastion. But history rhymes, and the rhyme is a warning. Walter Benjamin noticed almost a century ago that once reproduction becomes trivial, the “aura” of art migrates elsewhere. Arthur Danto later argued that what separates art from object is not execution, but context. And Gombrich quietly dismantled the myth of pure originality by showing that artists don’t copy reality — they operate through learned schemas. In other words: the sacred keeps moving.

Every time we automate a layer of the creative process, we declare the remaining fragment the “true” art. We automated the line, then perspective, then light, then the rendering itself. Each time, we retreated one level up the abstraction stack and called it human essence. Now we are left holding “intent.” But in an era of algorithmic prediction and probabilistic models, even intent may not be a mystical core. It may simply be the next layer in a very long pipeline — another set of parameters waiting to be tuned in someone else’s prompt.

Next up

A friend told me that my piece on narrative shattered her perception of literature. I suspect this one might cause a similar level of discomfort for a fair share of people. But just so no one feels left out—before I attempt to wrap up my ramblings—in the next installment, we’re going to take a look at music (spoiler: it’s potentially even easier to decompose)… :>

Art & Perception

The structural and psychological foundations of visual representation.


- Art and Illusion: A Study in the Psychology of Pictorial Representation. Gombrich, E. H., 1960.

The definitive source on how "style" is actually a set of learned schemas.

- Perspective as Symbolic Form. Panofsky, E., 1991.

Analyzes perspective as a mathematical and cultural construct.

- The Mirror, the Window, and the Telescope. Edgerton, S. Y., 2009.

Modern Movements & Mechanical Reproduction

The shift from representation to systems and curation.


- The History of Impressionism. Rewald, J., 1973.

- Cubism: A History and an Analysis, 1907-1914. Golding, J., 1959.

- The Work of Art in the Age of Mechanical Reproduction. Benjamin, W., 1935.

Crucial for the photography and "zero-cost renderer" argument.

- The Transfiguration of the Commonplace. Danto, A. C., 1981.

Explains the shift from object to context in Pop Art.

- Bauhaus: 1919-1933. Droste, M., 2002.

- The Non-Objective World. Malevich, K., 1927.

The "technical documentation" for the Suprematist black box.