· Systems · 10 min read

The Algorithm Behind the Melody: Music Was Always Code — We Just Didn't Have the Debugger

When the comedy group Axis of Awesome performs their famous “4 Chords” medley, the audience usually goes through two distinct phases. First, there is the laughter: the shared joy of recognizing that forty of their favorite pop songs are, in fact, the same song. But then, a subtle, lingering discomfort sets in. Forty hits, three minutes, and one single harmonic skeleton (I–V–vi–IV).

[Video: Axis of Awesome - 4 Chords Medley]

The laughter is a defense mechanism. We laugh because we’ve just seen the wires holding up the ghost. For a brief moment, the “divine inspiration” of the songwriter is revealed to be nothing more than a very efficient, very repeatable exploit of human psychoacoustics. If it feels like a heist, it’s because it is. But the thieves didn’t start with Spotify; they started with Pythagoras.

The First Breaking Change

We like to think of music as a language of the soul, but history suggests it’s actually the oldest algorithm we never bothered to document properly. Around 500 BCE, Pythagoras—the man who ruined middle school for everyone—discovered that pleasant musical intervals correspond to simple numerical ratios. If you pluck a string and then shorten it by exactly half, you get an octave. It’s a 2:1 ratio. No magic, just arithmetic. This was the first “breaking change” in music history, shifting music from the realm of the gods to the realm of the calculator.
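The arithmetic is small enough to sketch. The snippet below is an illustration only: the 220 Hz base pitch, the function names, and the idealized string (frequency inversely proportional to length) are assumptions made for the example, not anything taken from the historical record.

```python
from fractions import Fraction

# Simple whole-number ratios traditionally associated with consonant intervals.
INTERVAL_RATIOS = {
    "unison": Fraction(1, 1),
    "perfect fourth": Fraction(4, 3),
    "perfect fifth": Fraction(3, 2),
    "octave": Fraction(2, 1),
}


def interval_frequency(base_hz: float, interval: str) -> float:
    """Frequency of the note lying `interval` above `base_hz`."""
    return base_hz * float(INTERVAL_RATIOS[interval])


def string_length_for(interval: str, full_length: float = 1.0) -> float:
    """Length of an idealized string sounding `interval` above the open string.

    Assumes frequency is inversely proportional to length,
    so a 2:1 frequency ratio means half the string.
    """
    return full_length / float(INTERVAL_RATIOS[interval])


if __name__ == "__main__":
    base = 220.0  # assumed open-string pitch in Hz
    for name, ratio in INTERVAL_RATIOS.items():
        hz = interval_frequency(base, name)
        length = string_length_for(name)
        print(f"{name}: ratio {ratio}, {hz:.1f} Hz, string length {length:.3f}")
```

Halving the string doubles the frequency, which is the octave from the paragraph above; the fifth and the fourth fall out of the same one-line multiplication.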

The Accumulation of Design Patterns

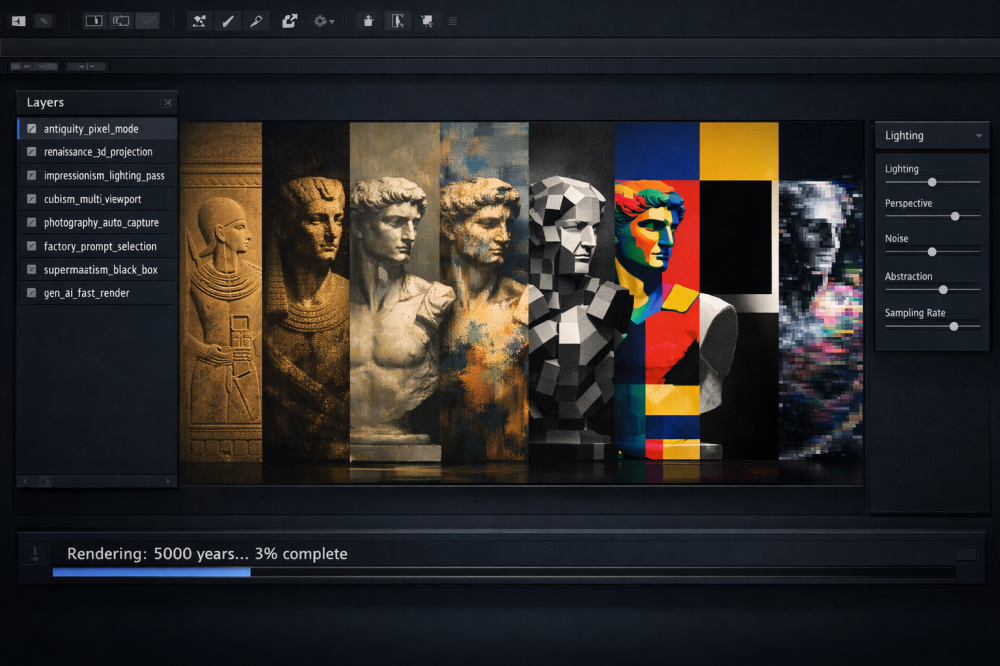

Ever since, Western music has been a slow, steady accumulation of what developers might call “best practices.” Much as the evolution of computer graphics is a story of stacking new rendering techniques to hide the limitations of the hardware, the history of music is a story of discovering new linting rules for the ears.

By the time we reached the medieval period, the Church had essentially published the first serious style guide. We call it Gregorian Chant, but in practice, it functioned as a restricted API for sound—a system where only a single, unaccompanied melody line was permitted, governed by a set of rigid “if-then” statements. In this environment, you couldn’t just wander the scale; every move had to follow a predefined modal protocol. No awkward leaps were allowed, and the Tritone—the infamous “Devil in music”—was effectively a forbidden character that would break the entire script. It was a spiritual firewall designed to ensure that the musical “message” was delivered without any emotional “noise” or interference from the composer’s ego. If your melody didn’t resolve according to the documentation, it wasn’t just bad art; it was a bug in the cosmic order.

Centuries later, the system evolved to handle multi-threaded processing. Baroque composers like Bach didn’t just write tunes; they wrote voice-interaction algorithms. Counterpoint is essentially a set of concurrency rules designed to prevent “race conditions” between independent melodic lines. Bach wasn’t just a composer; he was a high-level language compiler for the Baroque ear, ensuring that multiple threads of sound could run simultaneously without throwing a dissonance error.
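To make the “concurrency rules” metaphor concrete, here is a minimal sketch of a single counterpoint lint: flagging parallel perfect fifths and octaves between two voices. It assumes pitches are given as MIDI note numbers, one note per voice per step, and the function name `parallel_perfects` is made up for this example. Real counterpoint has many more rules than this one.

```python
# Interval classes (semitones mod 12) treated as "perfect" here:
# 0 = unison/octave, 7 = perfect fifth.
PERFECT_INTERVALS = {0, 7}


def parallel_perfects(upper, lower):
    """Return the step indices i where both voices move in the same
    direction from a perfect interval at step i to the same perfect
    interval at step i + 1."""
    if len(upper) != len(lower):
        raise ValueError("both voices need the same number of notes")

    hits = []
    for i in range(len(upper) - 1):
        before = (upper[i] - lower[i]) % 12
        after = (upper[i + 1] - lower[i + 1]) % 12
        upper_step = upper[i + 1] - upper[i]
        lower_step = lower[i + 1] - lower[i]
        same_direction = upper_step * lower_step > 0
        if same_direction and before == after and before in PERFECT_INTERVALS:
            hits.append(i)
    return hits


if __name__ == "__main__":
    # Two voices a fifth apart, climbing together: flagged at every step.
    print(parallel_perfects([67, 69, 71], [60, 62, 64]))
    # Contrary motion into imperfect intervals: nothing to report.
    print(parallel_perfects([67, 65, 64], [60, 62, 60]))
```

The check is deliberately dumb: it knows nothing about taste, only about which pairs of moves the style guide forbids.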

As we moved into the industrial era, the romance of the “lonely genius” finally collided with capitalism. The process was no longer about a single mind wrestling with the infinite; it was about building a production pipeline.

On a single street in New York known as Tin Pan Alley, songwriting became a literal factory floor. Composers sat in piano-filled cubicles churning out melodies while “song pluggers”—the 19th-century version of growth hackers—performed them in department stores to gauge real-time public reaction. Musical motifs were treated as proprietary, reusable assets: if a hook sold sheet music, it was tagged as a “best practice” and recycled until market saturation.

By the 1960s, Motown had taken this industrialization and turned it into an ISO 9001 certification. Berry Gordy, a former Ford assembly-line worker, explicitly applied the Detroit automotive philosophy to the “Motown Sound.” He didn’t just want hits; he wanted a standardized output that could be reproduced at scale. He instituted weekly “Quality Control” meetings where songs were critiqued with the cold detachment of engine parts on an inspection line. If a track didn’t have a “hit” profile within the first ten seconds, it was sent back for refactoring. It was formulaic, standardized, and arguably the most successful deployment of a musical template in history.

The Analog Plotter

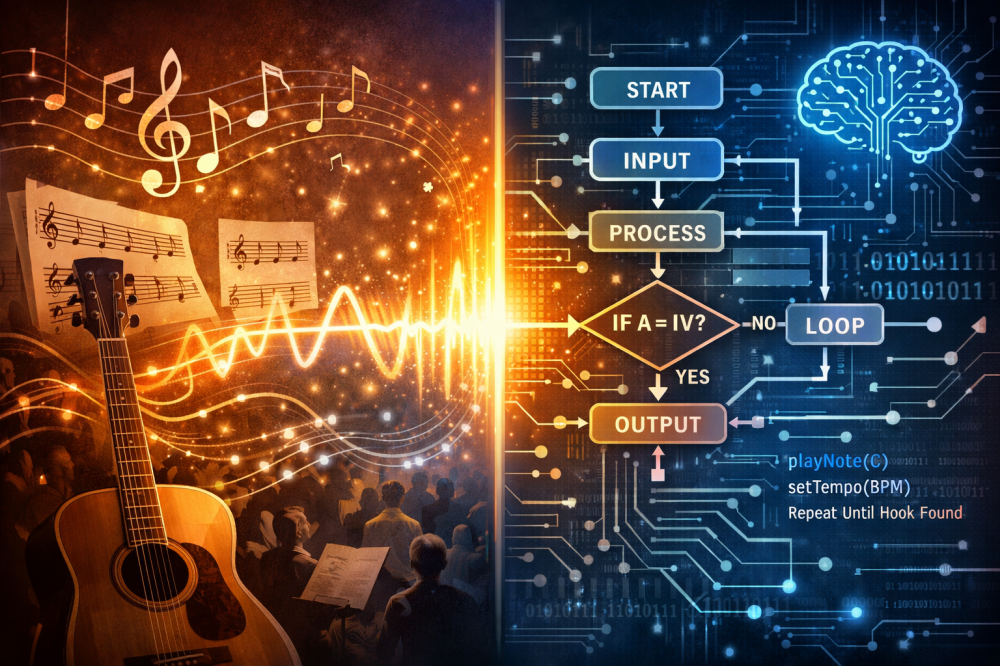

At this point, we need to enter a territory that usually triggers one of two reactions: a quiet, intense nerdgasm for a select few, or a full-blown case of high-school math PTSD for everyone else. If you still have nightmares about $x$ and $y$ axes, I apologize in advance. But if we strip away the historical baggage and the romantic sighs, a melody looks suspiciously like something you’d find in a dusty Excel spreadsheet.

In cold, mathematical terms, a melody is merely a function: $p = f(t)$. Time $t$ is your domain, and pitch $p$ is your range. That’s it. Musical notation, with its elegant curls and dramatic ink, is essentially just a 2D graph that we’ve collectively decided to dress up to make it look more “artistic.” When you see a violinist pouring their heart out on stage, what you’re actually witnessing is a very expensive analog plotter converting discrete data points into a continuous curve of air pressure. It’s less “divine inspiration” and more “real-time data visualization.”
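A rough sketch of the melody-as-function idea, under a few assumptions of my own: time is measured in beats, pitch is a MIDI note number, and the note list (the opening of “Twinkle, Twinkle, Little Star”) is chosen purely for illustration.

```python
from bisect import bisect_right

# (onset in beats, MIDI pitch) pairs. Illustrative data, not from the article.
NOTES = [(0.0, 60), (1.0, 60), (2.0, 67), (3.0, 67), (4.0, 69), (5.0, 69), (6.0, 67)]
END = 8.0  # the last note is held until beat 8

ONSETS = [onset for onset, _ in NOTES]


def melody(t: float):
    """The melody as a step function: the pitch sounding at time t,
    or None when t falls outside the tune."""
    if t < ONSETS[0] or t >= END:
        return None
    index = bisect_right(ONSETS, t) - 1  # last note that started at or before t
    return NOTES[index][1]


def midi_to_hz(pitch: int) -> float:
    """Equal-tempered frequency for a MIDI pitch, with A4 (69) at 440 Hz."""
    return 440.0 * 2 ** ((pitch - 69) / 12)


if __name__ == "__main__":
    for t in (0.0, 2.5, 4.0, 7.9):
        p = melody(t)
        print(f"t={t}: pitch {p}, {midi_to_hz(p):.1f} Hz")
```

The domain is time, the range is pitch, and the score is just this lookup table drawn with nicer ink.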

Now, once we accept this iconoclastic—and for many, probably blasphemous—vision of music as a mathematical function, things get truly interesting. Because once you have a function, you can do all sorts of strange things to it. I’m not talking about trivial tasks like finding zeros or identifying a local maximum; those are merely tools serving a much larger, more cynical purpose. In the cold halls of mathematics, we speak of interpolation, approximation, and estimation. Let’s skip the strict definitions. The uncomfortable truth is that composing music is a process disturbingly—even for me—similar to numerical approximation.

A composer tries to approximate a fuzzy, continuous emotional trajectory using discrete steps—notes, rhythms, and keys. It is a numerical method for feelings. We use a 12-note scale (a lossy compression format, if there ever was one) to try and hit a “local optimum” in the listener’s brain. Music doesn’t express emotion; it approximates the shape of emotion until the brain is convinced the two are the same.
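The “lossy compression” claim can be illustrated with a toy quantizer. Everything here is an assumption made for the sketch: the target `contour` is an arbitrary sine curve standing in for the fuzzy emotional trajectory, pitch is expressed as a fractional MIDI number, and one sample is taken per beat.

```python
import math


def contour(t: float) -> float:
    """Hypothetical continuous target curve, in fractional MIDI pitch."""
    return 64.0 + 5.0 * math.sin(2 * math.pi * t / 8.0)


def quantize(curve, n_steps: int, step: float = 1.0):
    """Sample the curve every `step` beats and snap each sample
    to the nearest semitone of the 12-note grid."""
    return [round(curve(i * step)) for i in range(n_steps)]


def max_error(curve, notes, step: float = 1.0) -> float:
    """Largest gap, in semitones, between the curve and its discrete stand-in."""
    return max(abs(curve(i * step) - note) for i, note in enumerate(notes))


if __name__ == "__main__":
    notes = quantize(contour, 8)
    print("notes:", notes)
    print("worst-case error in semitones:", max_error(contour, notes))
```

Rounding to the nearest semitone can never miss by more than half a semitone at the sampled points; everything the curve does between samples is simply thrown away, which is the lossy part.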

Engineering the Hook

Today, the industry has stopped pretending. Producers like Max Martin have turned songwriting into a science so precise it borders on the clinical. They monitor “hook density” and verse-to-chorus transitions with the same rigor an engineer uses to test a bridge’s load-bearing capacity. They aren’t “writing songs”; they are optimizing a fitness function for human attention. They know exactly how many milliseconds of silence the average teenager will tolerate before reaching for the “skip” button.

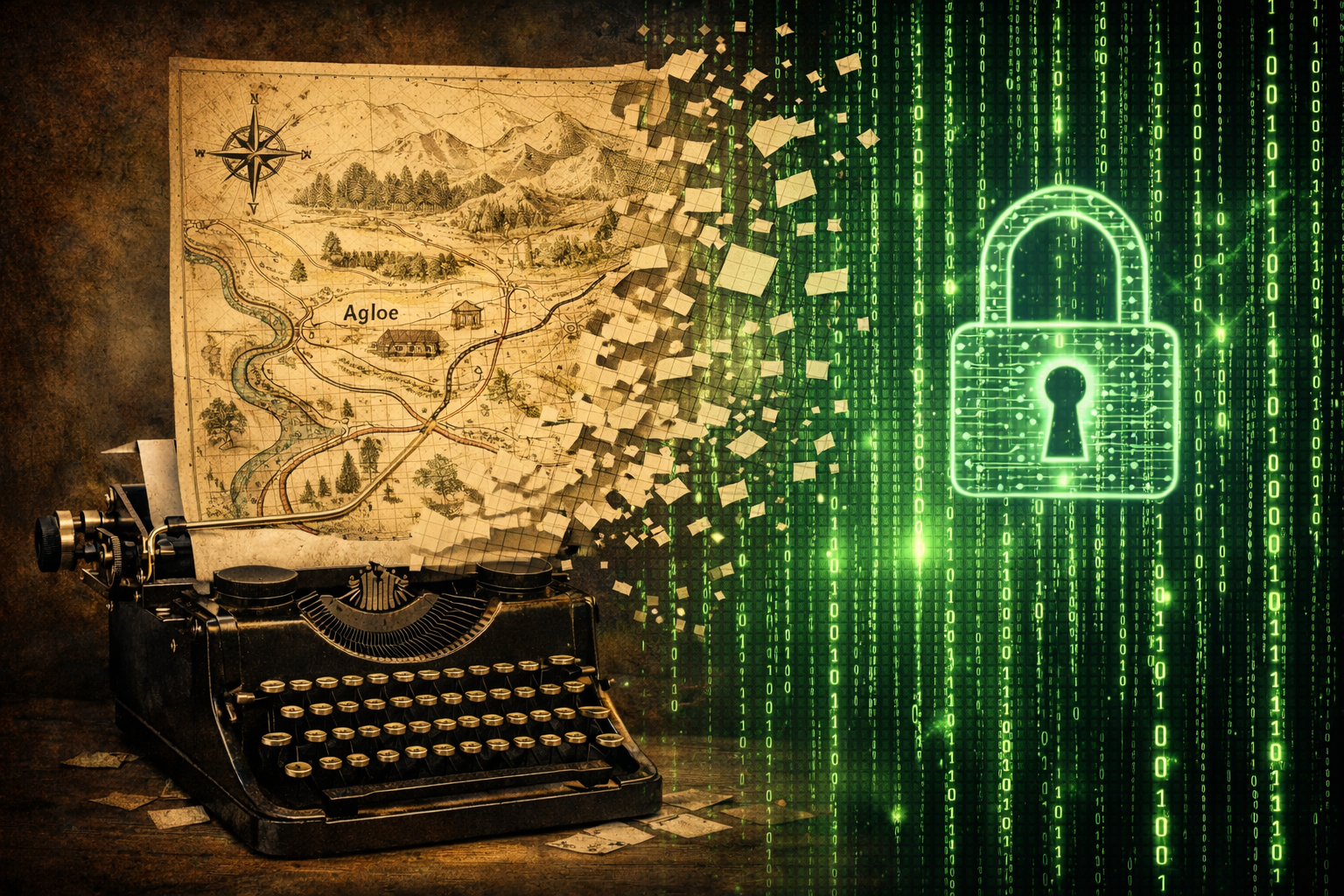

The 1981 Spoiler

The current panic over “AI-generated music” ignores the fact that we already solved this forty years ago. In 1981, David Cope wrote a program called EMI (Experiments in Musical Intelligence). He didn’t use neural networks or massive GPUs. He used pattern extraction and recombination rules based on the very design patterns we’ve been accumulating since Pythagoras.

The results were so convincing they triggered what can only be described as a collective nervous breakdown in the musicological community. When Cope played a piece “in the style of Bach” composed by EMI, listeners were moved to tears. One pianist famously praised the “deep soul” and “profound emotional intent” of a particular sonata—only to fall into a state of shocked silence when Cope revealed it was the output of a piece of software running on a machine that didn’t even have a pulse.

Even Douglas Hofstadter, the man who practically wrote the bible on the intersection of logic and art in Gödel, Escher, Bach, was visibly shaken. For years, he argued that it was “impossible” for a machine to truly create, yet EMI was hitting all the right notes. It was music’s “photography moment”: the realization that the thing we believed required a human spirit was actually just a sophisticated statistical signature. Cope proved that “style” is just an algorithm we haven’t finished reverse-engineering yet.
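To give a feel for what “pattern extraction and recombination” can mean, here is a deliberately tiny stand-in. It is not Cope's method and makes no claim to resemble EMI's internals: it is a first-order Markov chain over MIDI pitches, and the two-melody corpus and the function names are invented for the example.

```python
import random
from collections import defaultdict


def extract_transitions(melodies):
    """Pattern extraction: record which pitch follows which in the corpus."""
    table = defaultdict(list)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            table[current].append(following)
    return table


def recombine(table, start, length, seed=0):
    """Recombination: walk the transition table to build a new line that
    only ever uses note-to-note moves seen in the corpus."""
    rng = random.Random(seed)  # seeded so the walk is repeatable
    line = [start]
    while len(line) < length:
        options = table.get(line[-1])
        if not options:  # dead end: this pitch was never followed by anything
            break
        line.append(rng.choice(options))
    return line


if __name__ == "__main__":
    corpus = [
        [60, 62, 64, 65, 67],  # a rising five-note figure
        [67, 65, 64, 62, 60],  # and its mirror image
    ]
    table = extract_transitions(corpus)
    print(recombine(table, start=60, length=8))
```

Every step in the output is a move the corpus already contains, yet the line as a whole appears nowhere in it. Scale the corpus up to a composer's catalogue and the unit up from single notes to phrases, and the “statistical signature” idea starts to look less like a metaphor.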

The Telemetry of Taste

The algorithm has now moved from the composer’s desk to the listener’s pocket. If the history of music was a collection of “best coding practices,” the streaming era is the moment we finally got our hands on the testing data.

Streaming platforms have turned musical taste into a giant, real-time telemetry operation. Every time we interact with a track, we provide data points that were previously invisible. They track skip rates to measure immediate rejection, completion rates to gauge sustained engagement, playlist placement to understand social utility, and replays to identify the dopamine loops that signal a “hit.”
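A minimal sketch of how two of these signals could be computed from raw play events. The `Play` record, the 30-second skip cutoff, and the 95% completion cutoff are all assumptions chosen for illustration; no platform's actual definitions are implied.

```python
from collections import defaultdict
from dataclasses import dataclass

SKIP_SECONDS = 30.0       # assumption: abandoning a track this early counts as a skip
COMPLETE_FRACTION = 0.95  # assumption: hearing this share of a track counts as completing it


@dataclass
class Play:
    track_id: str
    seconds_played: float
    track_seconds: float


def summarize(plays):
    """Per-track play count, skip rate, and completion rate."""
    grouped = defaultdict(list)
    for play in plays:
        grouped[play.track_id].append(play)

    stats = {}
    for track_id, events in grouped.items():
        total = len(events)
        skips = sum(e.seconds_played < SKIP_SECONDS for e in events)
        completes = sum(
            e.seconds_played >= COMPLETE_FRACTION * e.track_seconds for e in events
        )
        stats[track_id] = {
            "plays": total,
            "skip_rate": skips / total,
            "completion_rate": completes / total,
        }
    return stats


if __name__ == "__main__":
    events = [
        Play("track-a", 10, 200),
        Play("track-a", 200, 200),
        Play("track-a", 195, 200),
        Play("track-a", 100, 200),
    ]
    print(summarize(events))
```

Nothing in this loop knows what the song sounds like. It only counts what listeners did with it.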

In effect, we’ve moved from constructing functions to evaluating them with surgical precision. This is why giants like Spotify or the creators of the Music Genome Project and Hit Song Science (which was already predicting success via mathematical modeling back in 2003) were able to build such effective evaluation tools. With this much telemetry, we no longer have to “guess” the coordinates of the emotional curve we are trying to approximate. We have a high-resolution heatmap of what the audience actually tolerates.

De-obfuscating the Magic

Once you strip the magic away and look at music through the lens of data-driven evaluation, it becomes much easier to understand why modern AI is so effective in this field. Whether we like it or not, generative models are quite literally built to optimize functions across massive, high-dimensional datasets. They aren’t “feeling” the music; they are just very, very good at precision curve-fitting.

This realization—that our most profound emotional experiences are the result of a well-optimized function—is a bitter pill. It feels like finding out your favorite childhood magician was just using a double-bottom box. But this is exactly where the perspective shift happens.

Music always felt magical because we experienced the output without seeing the source files. AI didn’t invent algorithmic music; it simply removed the illusion that there was no algorithm there in the first place. It forced us to see the sleight of hand.

But here’s the thing about magic: knowing how the trick is done doesn’t make the performance any less impressive. It just shifts our appreciation from the result to the execution—to the skill required to manipulate the “code” of our emotions so convincingly. And as shows like Penn & Teller: Fool Us remind us, even those who understand every sleight of hand in the book can still be caught off guard by a truly brilliant performer. We might understand the repertoire of musical tools better than ever before, but that doesn’t mean we’ve reached the end of the map. There is still plenty of room for us to be surprised.

Next Up: The Post-Mortem

In the next installment, we’ll reach the end of this particular roadmap. After looking at digital ghosts, blank pages, slow renderers, and musical functions, we will attempt to summarize this entire cycle and see where we actually stand in this algorithmic landscape.

In the meantime, if you enjoyed these cynical ruminations, consider subscribing to the newsletter. It’s where I share other—usually slightly lighter—thoughts on systems, tech, and the strange ways we try to remain human in a world made of code.

Suggested Reading / References

- Cope, D. (2001). Virtual Music: Computer Synthesis of Musical Style.

- Hofstadter, D. R. (1979). Gödel, Escher, Bach: An Eternal Golden Braid.

- Levitin, D. J. (2006). This Is Your Brain on Music: The Science of a Human Obsession.

- Seabrook, J. (2015). The Song Machine: Inside the Hit Factory.

- Du Sautoy, M. (2019). The Creativity Code: How AI Is Learning to Write, Paint and Think.

- Byrne, D. (2012). How Music Works.