The Obsession With Measurement

Why we measure everything and why it's not always a good thing.

We have a deep, almost obsessive need to measure reality. Not just observe it, not just understand it — measure it, quantify it, turn it into something that fits neatly into a dashboard or a quarterly report.

And when reality refuses to cooperate, we don’t give up.

We adapt the model. We redefine the problem. We find something adjacent, something easier, something measurable.

If something cannot be measured, it becomes uncomfortable. Hard to discuss, hard to defend, and almost impossible to justify in a room full of people staring at numbers.

So we don’t argue with reality.

We replace it.

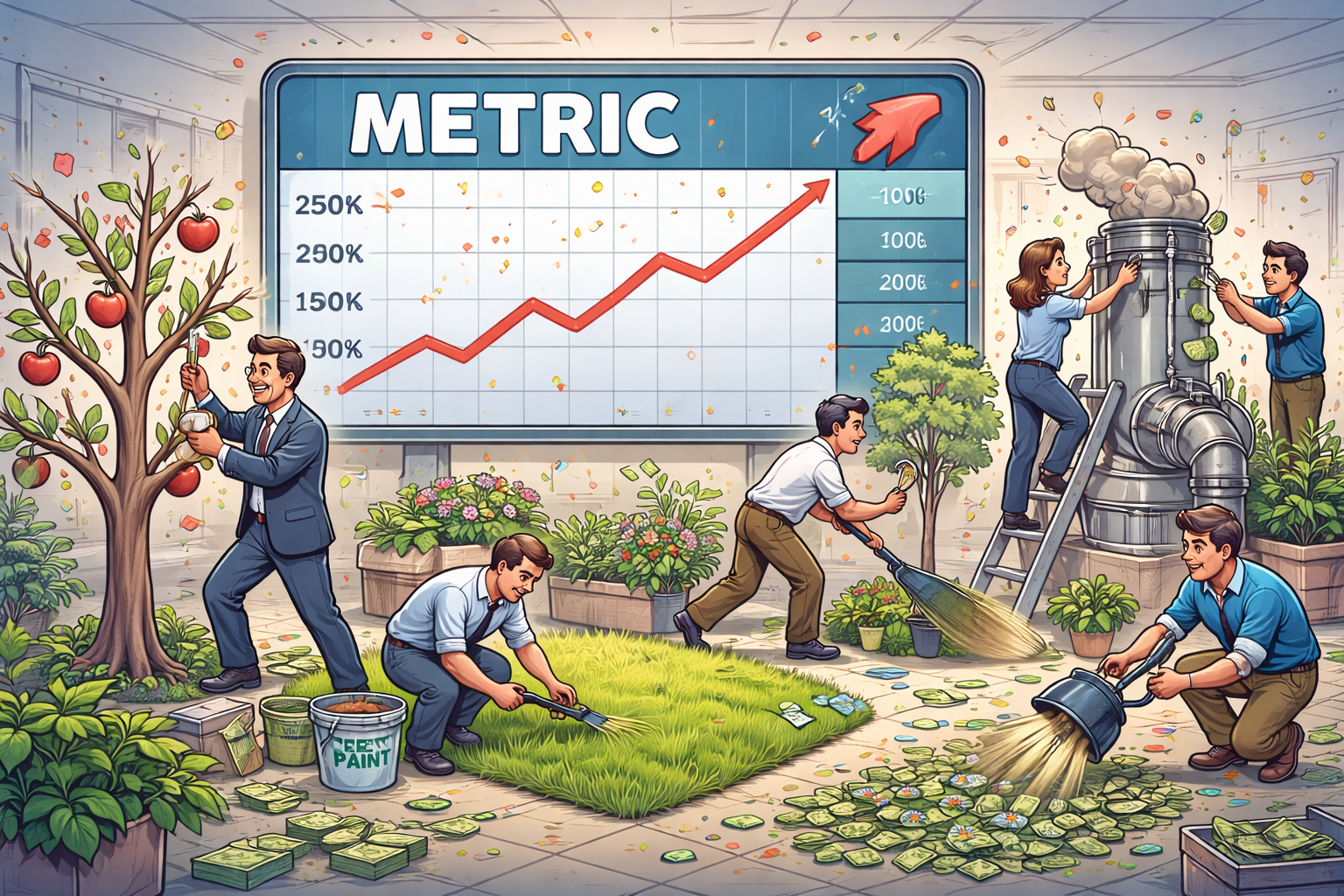

Proxy Metrics: The Convenient Lie

A proxy metric starts as a practical compromise. We can’t measure “quality,” so we measure engagement. We can’t measure “impact,” so we measure output. We can’t measure “control,” so we measure possession.

At first, this feels reasonable — even necessary. Systems need signals, and perfect ones are rarely available.

But over time, something subtle shifts.

The proxy stops being a representation of reality and starts becoming its substitute. What began as a shortcut slowly turns into the thing itself. The map replaces the territory, and no one quite remembers when the switch happened.

When the Metric Becomes the Goal

Once something is measurable, it becomes optimizable. And once it becomes optimizable, it inevitably becomes the goal.

This is where systems begin to drift.

In sports, teams optimize for what the numbers reward: more passes, more possession, more statistically efficient shots. On paper, everything improves. The models say the game is better than ever.

Take the NBA as a case study. Over the last decade, the league has been systematically “solved” by analytics: maximize three-point shots, eliminate mid-range attempts, optimize spacing, reduce variance where possible. From a statistical perspective, it’s beautiful: cleaner shot selection, higher efficiency, better expected outcomes per possession.

And we can actually see this shift in the numbers. Three-point attempts exploded from fewer than 3 per team per game in the 1979–1980 season to roughly 34 per team per game by 2019–2020. That’s not evolution; that’s a complete strategic rewrite of the sport. And yet, despite this massive increase in volume, the league-average three-point percentage has remained almost unchanged, hovering around 35–36% for the past two decades.

In other words: we didn’t suddenly get dramatically better at shooting. We just started doing a lot more of the thing the model told us was optimal.
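The arithmetic behind that recommendation fits in a few lines. This is a minimal sketch, not anyone's actual model: the 35.5% three-point rate is simply the midpoint of the range quoted above, and the 40% mid-range rate is an assumed figure chosen for illustration, not a number from this article.

```python
def expected_points(shot_value: int, make_probability: float) -> float:
    """Average points produced by one attempt of a given shot type."""
    return shot_value * make_probability


THREE_POINT_PCT = 0.355  # midpoint of the 35-36% range cited above
MID_RANGE_PCT = 0.40     # assumption for illustration only

three = expected_points(3, THREE_POINT_PCT)
mid = expected_points(2, MID_RANGE_PCT)

# Mid-range accuracy a shooter would need to match the average three-pointer.
break_even = three / 2

print(f"three-pointer: {three:.3f} points per attempt")
print(f"mid-range:     {mid:.3f} points per attempt")
print(f"break-even mid-range accuracy: {break_even:.3f}")
```

Under these assumptions, a mid-range shooter would have to make more than half of their attempts just to keep up with an average three-point shooter. The spreadsheet only has to say that once for every team to hear it.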

And yet, over the past few years, something uncomfortable has been happening. Despite higher scoring and “more efficient” basketball, NBA viewership has struggled to sustain the levels seen in the 1990s, with a long-term downward trend that no amount of offensive optimization seems to reverse.

Fans are noticing it too. The complaint isn’t that the game is worse in a measurable sense. By the numbers it is better: more efficient, more optimized, more aligned with what the models recommend.

The complaint is that it feels worse. More repetitive. More predictable. More homogeneous. Less expressive. Less… human.

When every team converges on the same “optimal” strategy, the game stops being a competition of styles and becomes an execution of the same spreadsheet with different jerseys.

The metric improved. The experience degraded. We didn’t improve the game.

We optimized the metric that was supposed to describe it.

Engagement Is Not Value

Social media followed the exact same trajectory.

We couldn’t measure meaning, depth, or long-term value, so we measured engagement instead — clicks, likes, shares, comments. That choice looked harmless at first. It gave us numbers, comparability, something to optimize.

And the system did exactly what systems do: it adapted to the signal it was given. Not toward truth or usefulness, but toward whatever spreads fastest and triggers the strongest reaction.

Outrage beats nuance, emotion beats accuracy, and certainty beats actual thinking. Over time, the system becomes extremely good at producing engagement — and increasingly bad at producing anything else.

The metric improves while reality quietly degrades.
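A toy simulation makes the mechanism concrete. Everything here is hypothetical: the posts, the two hidden traits, and especially the `engagement` formula, which simply assumes that outrage drives reactions far more than value does. It is a sketch of the dynamic, not a model of any real platform.

```python
import random
from statistics import mean

random.seed(0)  # fixed seed so the sketch is repeatable

# Each hypothetical post has two hidden traits, both between 0 and 1.
posts = [
    {"value": random.random(), "outrage": random.random()}
    for _ in range(1000)
]


def engagement(post: dict) -> float:
    # Assumed proxy: reactions are driven mostly by outrage, a little by value.
    return 0.2 * post["value"] + 0.8 * post["outrage"]


FEED_SIZE = 50

# The feed the system builds when it optimizes the measurable signal.
feed_by_engagement = sorted(posts, key=engagement, reverse=True)[:FEED_SIZE]
# The feed we would build if value could be measured directly.
feed_by_value = sorted(posts, key=lambda p: p["value"], reverse=True)[:FEED_SIZE]

for name, feed in [("engagement", feed_by_engagement), ("value", feed_by_value)]:
    avg_engagement = mean(engagement(p) for p in feed)
    avg_value = mean(p["value"] for p in feed)
    print(f"ranked by {name}: engagement={avg_engagement:.2f}, value={avg_value:.2f}")
```

The engagement-ranked feed wins on the number being reported and loses on the one nobody can see. Nothing in the ranking code is broken; it is doing exactly what it was asked to do.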

The Optimization Trap

The moment a metric becomes a target, reality quietly leaves the room.

People stop doing the thing itself and start doing the measurable version of the thing. Developers stop building systems and start producing output. Teams stop creating value and start hitting KPIs. Products stop solving problems and start maximizing engagement.

From the outside, everything still looks like progress — because the numbers continue to move in the right direction.

But the system has already shifted underneath.

Institutional Amnesia

What makes this pattern particularly interesting is not that it happens — it’s that we keep rediscovering it.

In the 1980s, measuring developer productivity by lines of code was already recognized as nonsense. More code didn’t mean better systems; if anything, it meant more complexity, more bugs, and more maintenance overhead.

Today, with better tools and significantly worse memory, we celebrate generating tens of thousands of lines of code per week.

Same idea. Better marketing.

We didn’t forget the lesson. We just reintroduced the mistake under a different name.

Why This Keeps Happening

This isn’t a failure of intelligence. It’s a property of systems.

Metrics are easy to report, easy to compare, and easy to scale. They fit neatly into dashboards, slide decks, and executive summaries.

Reality, on the other hand, is messy, contextual, and resistant to simplification. It doesn’t compress well into a single number, and it rarely aligns with quarterly reporting cycles.

So when forced to choose, we don’t pick accuracy.

We pick convenience.

The Real Cost

When you replace reality with a metric, two things happen simultaneously: the metric improves, and reality degrades.

But only one of those shows up in the report.

By the time the gap becomes visible — through failures, regressions, or systems that feel inexplicably worse despite “better” numbers — it’s usually too late. The system has already adapted to protect the metric, not the outcome it was supposed to represent.

At that point, you are no longer measuring reality.

You are maintaining an illusion.